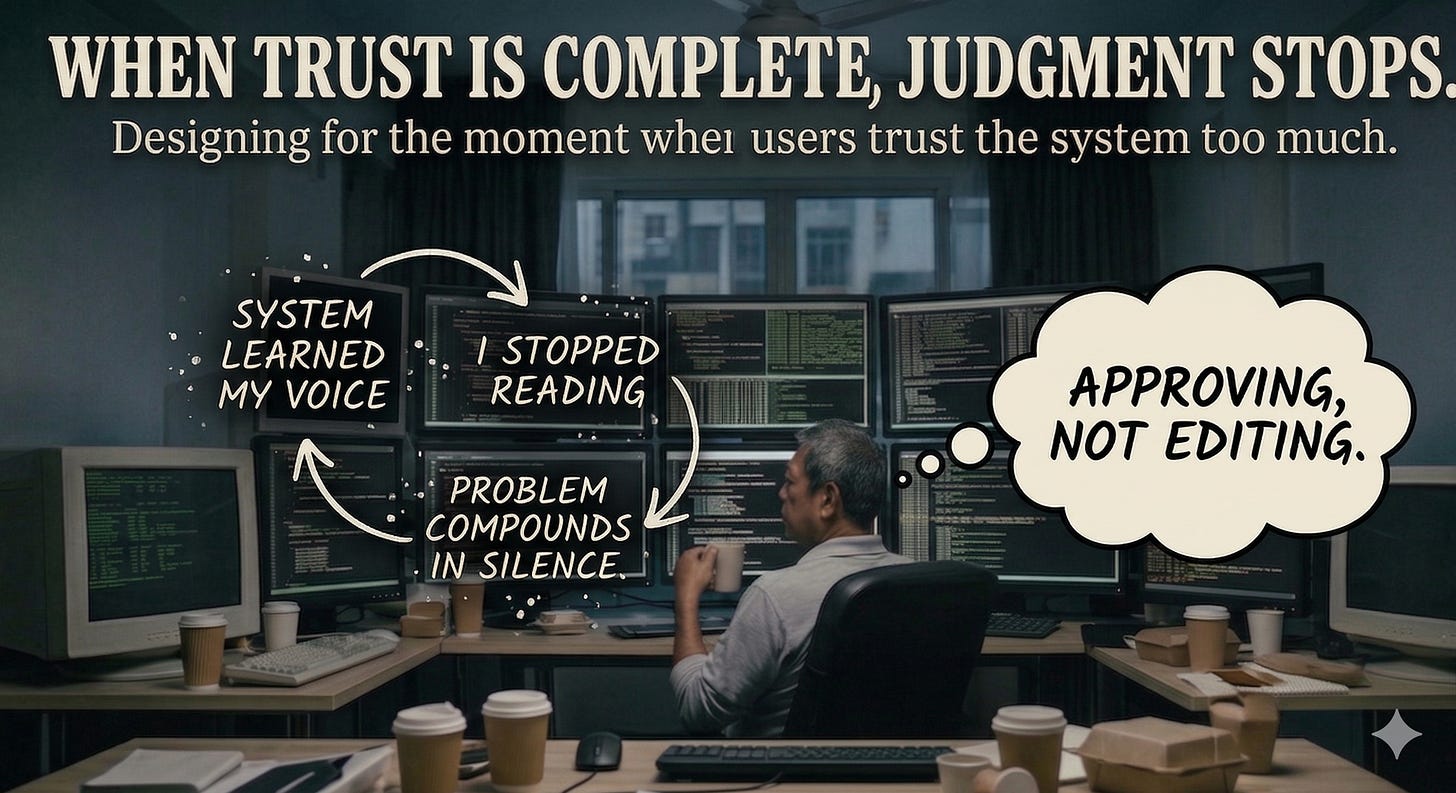

At some point, I stopped reading what I was approving.

The workflow had become clean.

Research comes in. The AI synthesizes it. A draft Product Requirements Document (PRD) appears. I review, adjust the framing, and move it forward. Tickets get written, scoped, pushed into Linear. The acceptance criteria look right. The language sounds like mine because I had shaped the brief that produced it.

The queue kept moving. The output kept looking right.

Then a teammate flagged something. An acceptance criterion that didn’t quite hold up. Not a disaster. Nothing that broke the sprint. Just a condition that, if you read it carefully, didn’t actually cover the edge case it claimed to.

I had read it. I just hadn’t interrogated it.

That is a different thing entirely.

I was approving. Not editing.

The distinction sounds small. It isn’t.

The Metric of Failure

For twenty years, the goal of every product I built was to earn trust.

More trust meant higher conversion, lower churn, and better outcomes. Trust was the metric. You designed for it, measured it, and optimized it. If users trusted your product, you had done your job.

AI is the system where that assumption breaks.

There is a known failure mode called algorithm aversion. Users reject AI output even when it outperforms humans. They see one mistake and walk away.

Designers have spent years trying to solve it: better explanations, more transparency, progressive disclosure. The pitch is simple: build enough trust and users will act.

The opposite failure mode is the one nobody designs for.

When trust is complete, judgment stops.

The Cost of Automation Bias

Researchers call it automation bias. You trust the system so thoroughly that you outsource the part of yourself that catches errors.

The model keeps producing. The approvals keep moving. The problem compounds in silence, at the pace of the output.

That is what happened to me. I was no longer evaluating the content. I was processing it.

The question I had spent years embedding into every product I built, what is the user afraid of, had disappeared from my own workflow.

I was not afraid of anything. The output looked right. I had eliminated the friction.

Friction was the point.

On March 31, 2026, Anthropic accidentally published 512,000 lines of Claude Code’s internal source code to a public software registry.

Not a hack. Not a breach. A routine release. A debug file that should have stayed internal was bundled into the public package.

The kind of misconfiguration that happens when a process is trusted more than it is verified.

Two significant operational failures in a single week from a company that built its identity on responsible AI.

Not the work of adversaries. The result of internal processes that had accumulated too much trust in their own systems.

Institutionalizing Distrust

This is not a story about negligence. It is a story about what happens at the end of a long run of things working correctly.

When something works well, the natural response is to check it less. Every efficiency gain is a small withdrawal from the oversight account.

The withdrawals feel invisible until the account is empty and something ships that should not have.

Financial systems solved this decades ago. Not by trusting better, but by institutionalizing distrust.

Critical transactions use a maker–checker system. Not because the first person is incompetent, but because even competent people miss things when everything has been running smoothly for too long.

The lesson AI product design has yet to absorb: the goal is not to make people trust the system correctly. It is to make the system behave correctly when people inevitably trust it too much.

Build with Friction

That is a product problem.

It is not solved by adding transparency layers. It is solved by building friction back into the process deliberately.

Mandatory checkpoints. Rotating reviewers. Anomaly flags that interrupt the approval flow before it becomes automatic.
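
One way to picture those three mechanisms is as a small gate sitting in front of the approve button. The Python below is a minimal sketch under stated assumptions, not a description of any real tool: `ApprovalGate`, `Draft`, the `checkpoint_every` threshold, and the rule that a deep review requires written notes are all hypothetical choices made for illustration. The anomaly flags are assumed to come from some upstream check that is not shown.

```python
from dataclasses import dataclass, field
from itertools import cycle


@dataclass
class Draft:
    author: str
    text: str
    # Anomaly flags are assumed to be set by an upstream check (not shown).
    flags: list = field(default_factory=list)


class ApprovalGate:
    """Hypothetical gate that puts deliberate friction into approvals."""

    def __init__(self, reviewers, checkpoint_every=5):
        if len(set(reviewers)) < 2:
            raise ValueError("need at least two distinct reviewers")
        self._reviewers = list(reviewers)
        self._rotation = cycle(self._reviewers)  # rotating reviewers
        self._checkpoint_every = checkpoint_every
        self._streak = 0  # standard approvals since the last deep review

    def assign_reviewer(self, draft):
        # Rotate through reviewers, skipping the author so that
        # the maker and the checker are never the same person.
        for _ in range(len(self._reviewers)):
            candidate = next(self._rotation)
            if candidate != draft.author:
                return candidate
        raise RuntimeError("no eligible reviewer")

    def review_mode(self, draft):
        # Return "deep" whenever the approval flow should be interrupted.
        if draft.flags:
            return "deep"  # anomaly flag
        if self._streak + 1 >= self._checkpoint_every:
            return "deep"  # mandatory checkpoint
        return "standard"

    def approve(self, draft, reviewer, notes=""):
        if reviewer == draft.author:
            raise PermissionError("author cannot approve their own draft")
        mode = self.review_mode(draft)
        if mode == "deep" and not notes.strip():
            raise ValueError("deep review requires written notes")
        self._streak = 0 if mode == "deep" else self._streak + 1
        return mode


# Usage: every third approval is forced to be a deep review.
gate = ApprovalGate(["ana", "ben"], checkpoint_every=3)
ticket = Draft(author="ana", text="Acceptance criteria for export flow")
reviewer = gate.assign_reviewer(ticket)
print(reviewer, gate.approve(ticket, reviewer))
```

The friction here is the notes requirement. A reviewer can still approve quickly most of the time, but at a checkpoint or on a flagged draft the gate refuses a silent click and asks for evidence that someone actually read the thing.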

None of these make a product feel less trustworthy. They are what make it actually trustworthy over time.

The design discipline I know was built to reduce fear and remove friction. That arc holds when you are trusting a checkout flow where the cost of failure is a refund.

The cost of over-trusting a system that executes without questioning you is not bounded the same way.

The hardest problem AI creates is designing for the right amount of trust.

Not so little that the tool is ignored. Not so much that the human check disappears.

For twenty years, I asked three questions on every project.

What does the user need to know right now. What are they afraid of. What happens if they get it wrong.

With AI, those questions have to be aimed at myself first.

What am I afraid of here. What happens if I trust this completely and I am wrong. What does getting it wrong at scale look like.

I should have asked them earlier.

I was too busy approving.