Before AI, weak strategy was slow and visible.

You could see the overbuild coming.

The roadmap ballooned.

The backlog swelled.

You had time to notice.

Time to argue.

Time to cut.

Now it’s fast, polished, and expensive before anyone notices.

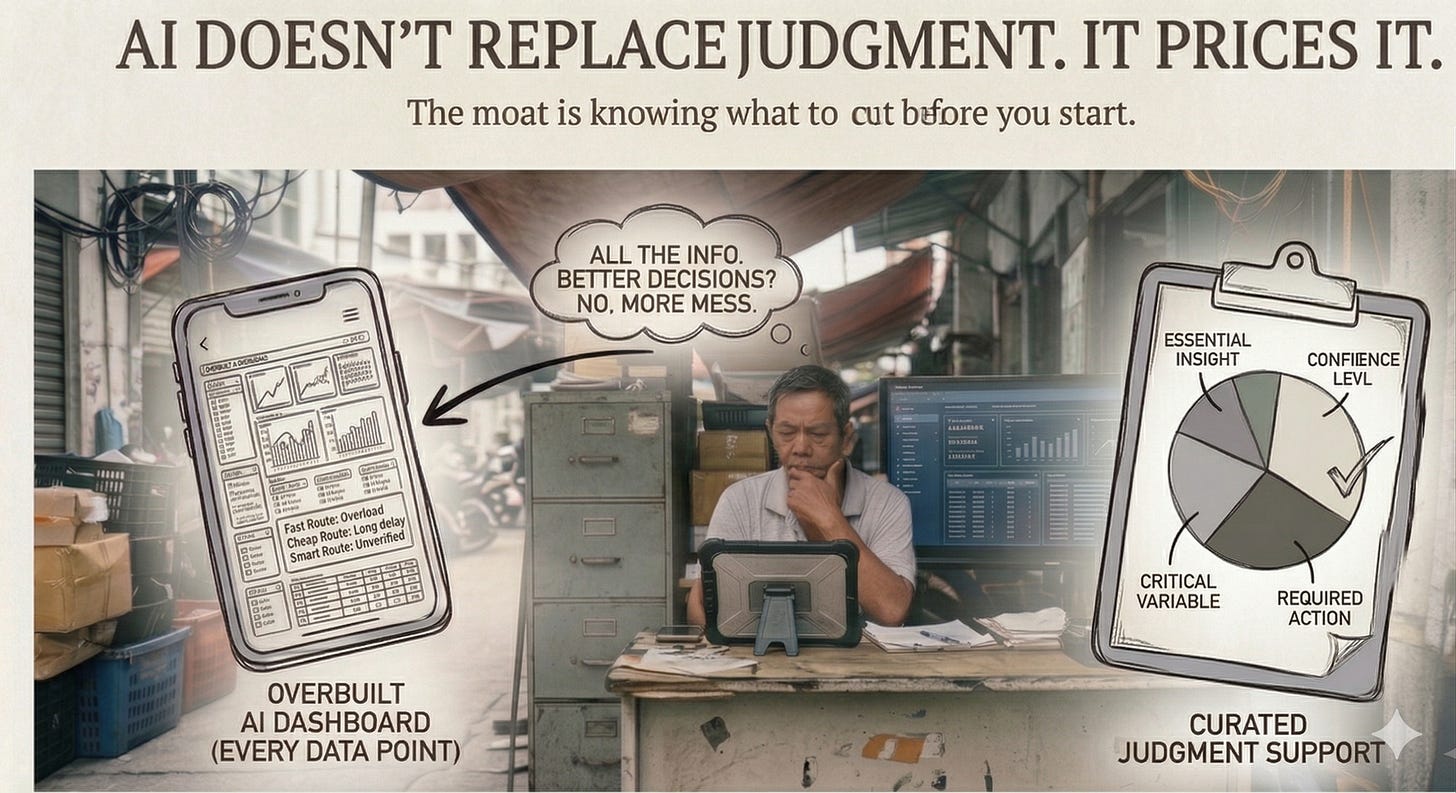

We were building a dashboard.

The team had a hypothesis: show users everything.

More data, better decisions.

AI could generate it all in an afternoon, so we did.

Every chart we could surface.

Every filter we could imagine.

Every data point the system could produce.

It looked impressive.

It was a mess.

I challenged the team with two hypotheses.

One: users want all the information so they can make better judgments.

Two: information overload kills the decision. Not the lack of data.

We tested both.

The second one won.

We cut everything that didn’t answer a hypothesis.

What remained:

one clean dashboard,

simple filters,

a plain-language report instead of graphs.

A beta user opened it.

Clicked through.

Found what he needed immediately.

“I like this,” he said.

“It’s not overwhelming.”

He didn’t know about the version we didn’t ship.

That’s what judgment looks like in the AI era.

Not the model.

Not the interface.

The decision about what not to build.

The hypothesis before the build.

The clarity about what the user actually needs to do, not what the AI is capable of showing.

There’s a familiar pattern behind feature creep:

teams confuse capability with value.

When the cost of building drops to near zero, everything feels worth building.

The constraint used to be time and engineering hours.

That kept teams honest.

Remove the constraint and you don’t get better products.

You get bigger ones.

Anyone can vibe-code a feature-heavy product in an afternoon now.

The moat isn’t the build.

It’s knowing what to cut before you start.

This is what AI actually does to strategy.

It doesn’t eliminate the need for judgment.

It raises the price of poor judgment.

A bad hypothesis used to fail slowly,

in a sprint or two,

before too much was committed.

Now it fails at the pace of AI output:

fast, complete,

and already in staging

before the team steps back to ask

whether it was the right thing to build.

Speed without a hypothesis isn’t velocity.

It’s drift

with good visual design.

The teams winning with AI aren’t the ones prompting faster.

They’re the ones who spend more time before the prompt than after it.

Getting the question right.

Naming the assumption clearly.

Deciding what done actually means

before asking the tool to go get it.

AI gave us the speed to build everything.

Judgment tells us not to.

The tool was never the problem.

Knowing what to build with it always was.
